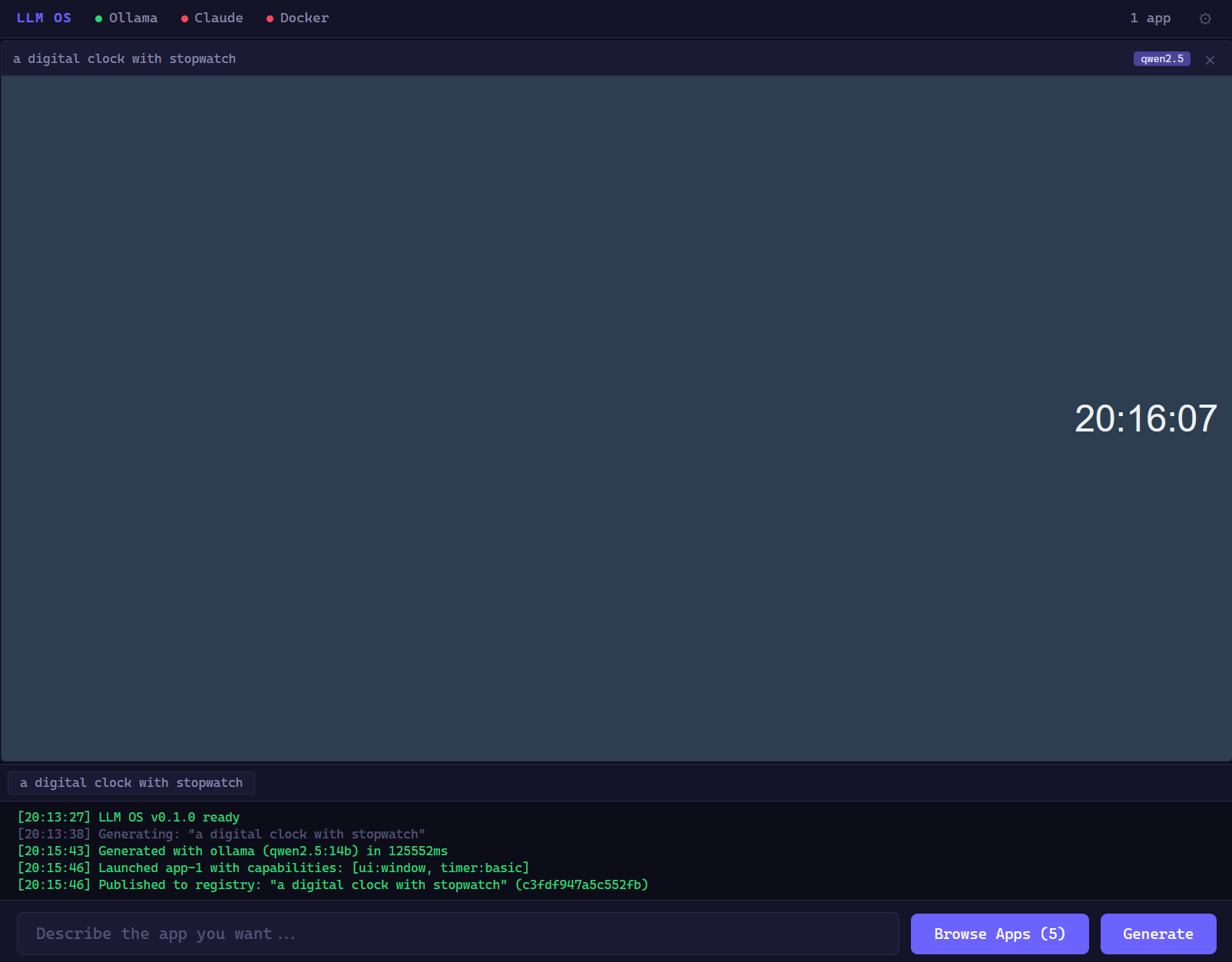

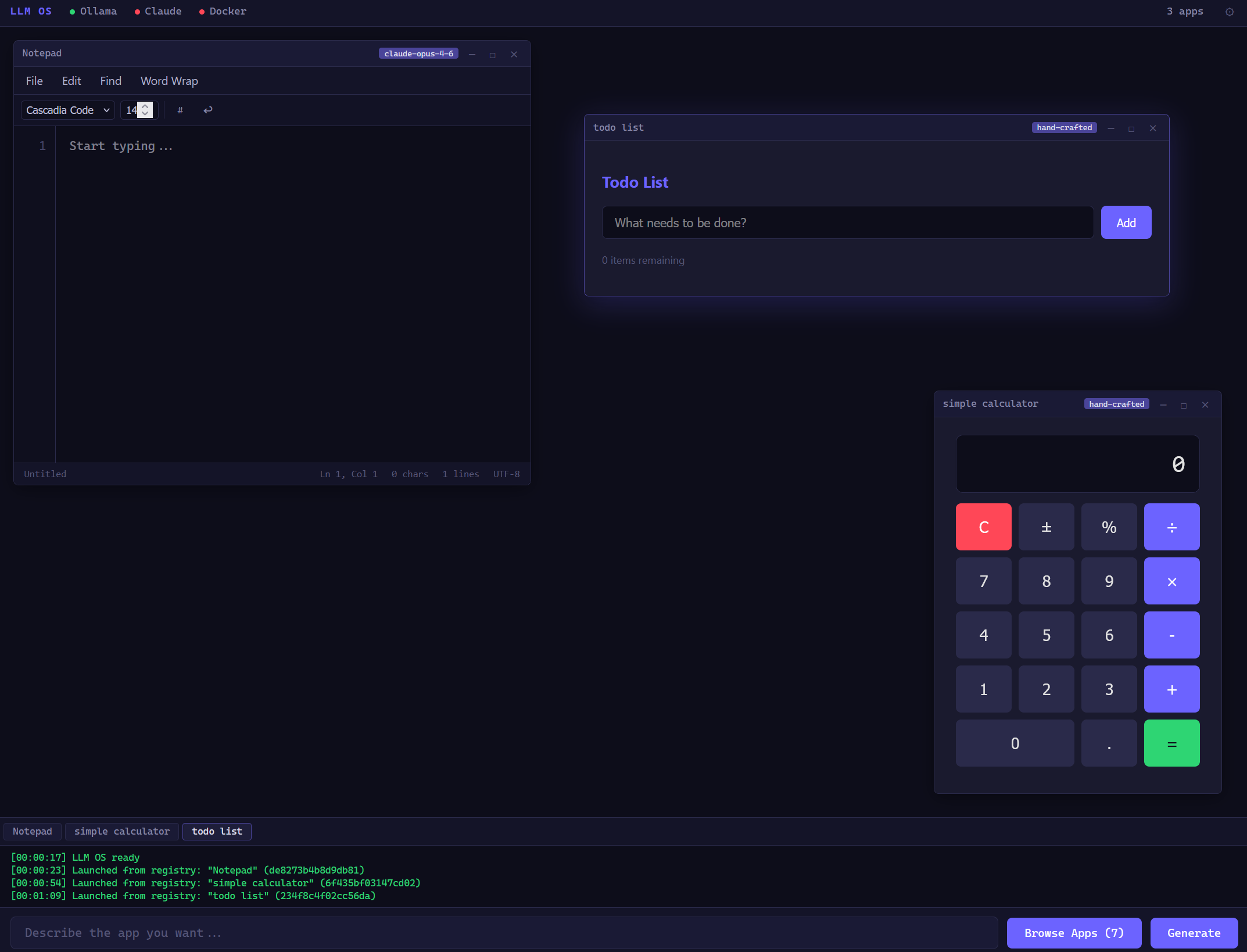

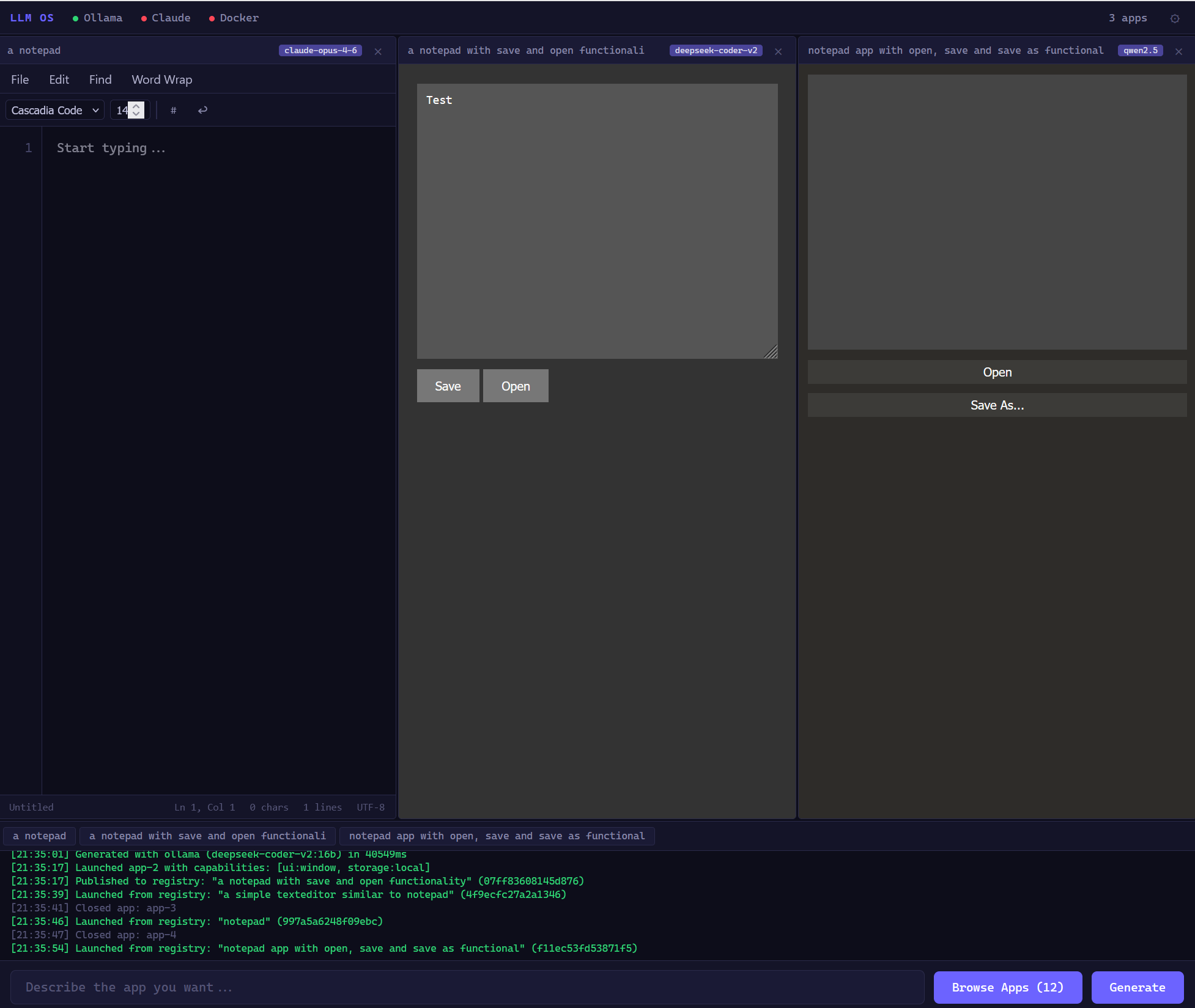

Early Progress

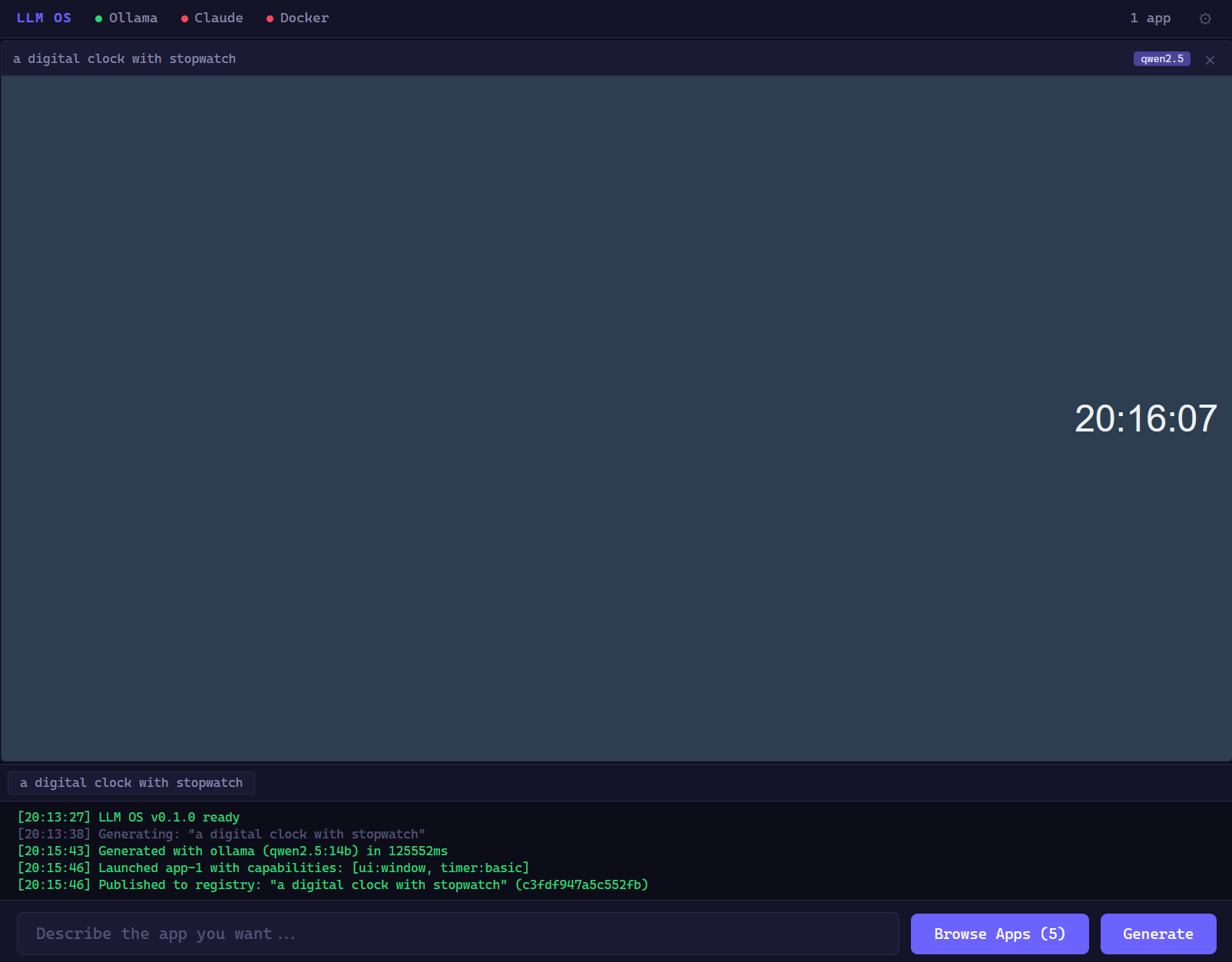

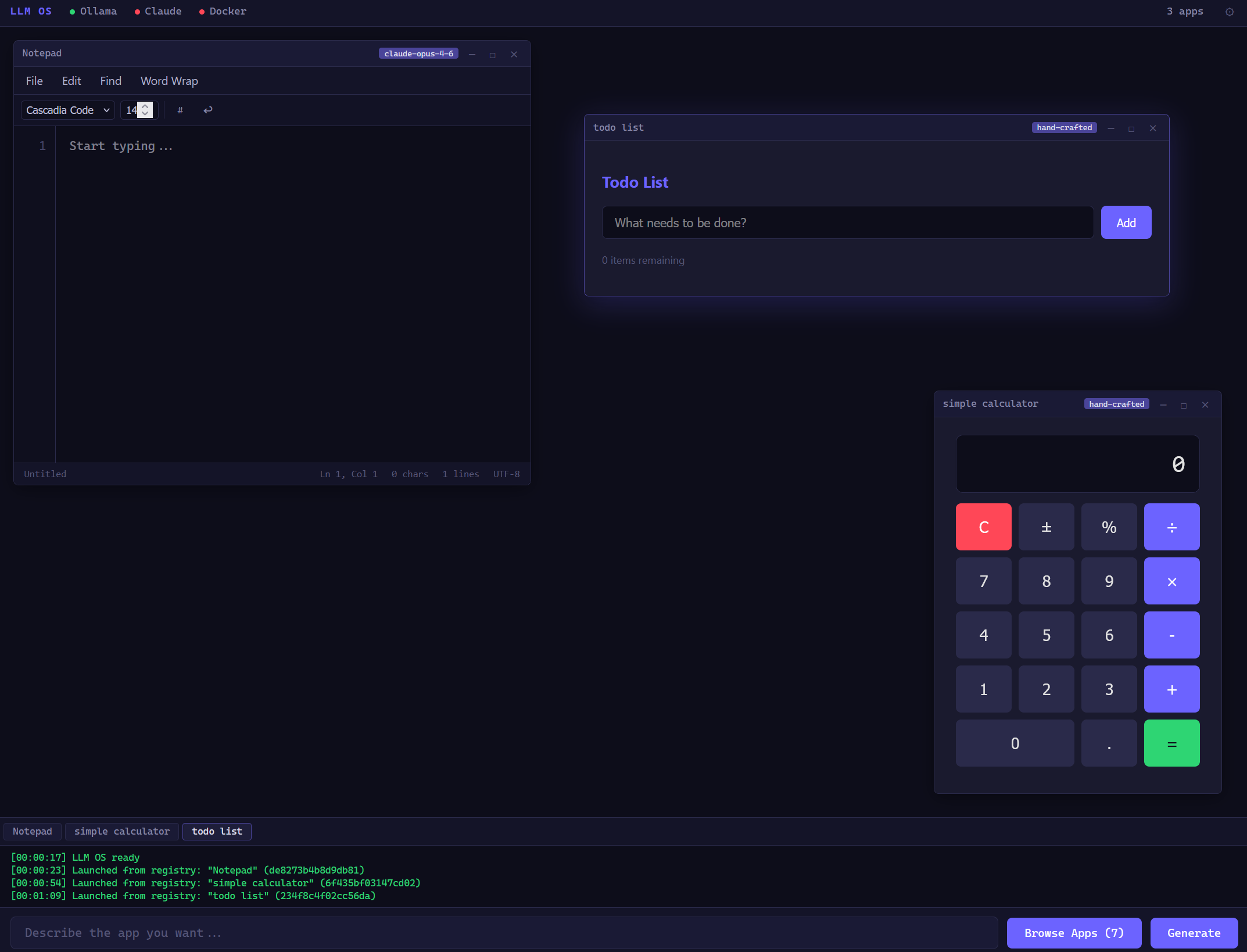

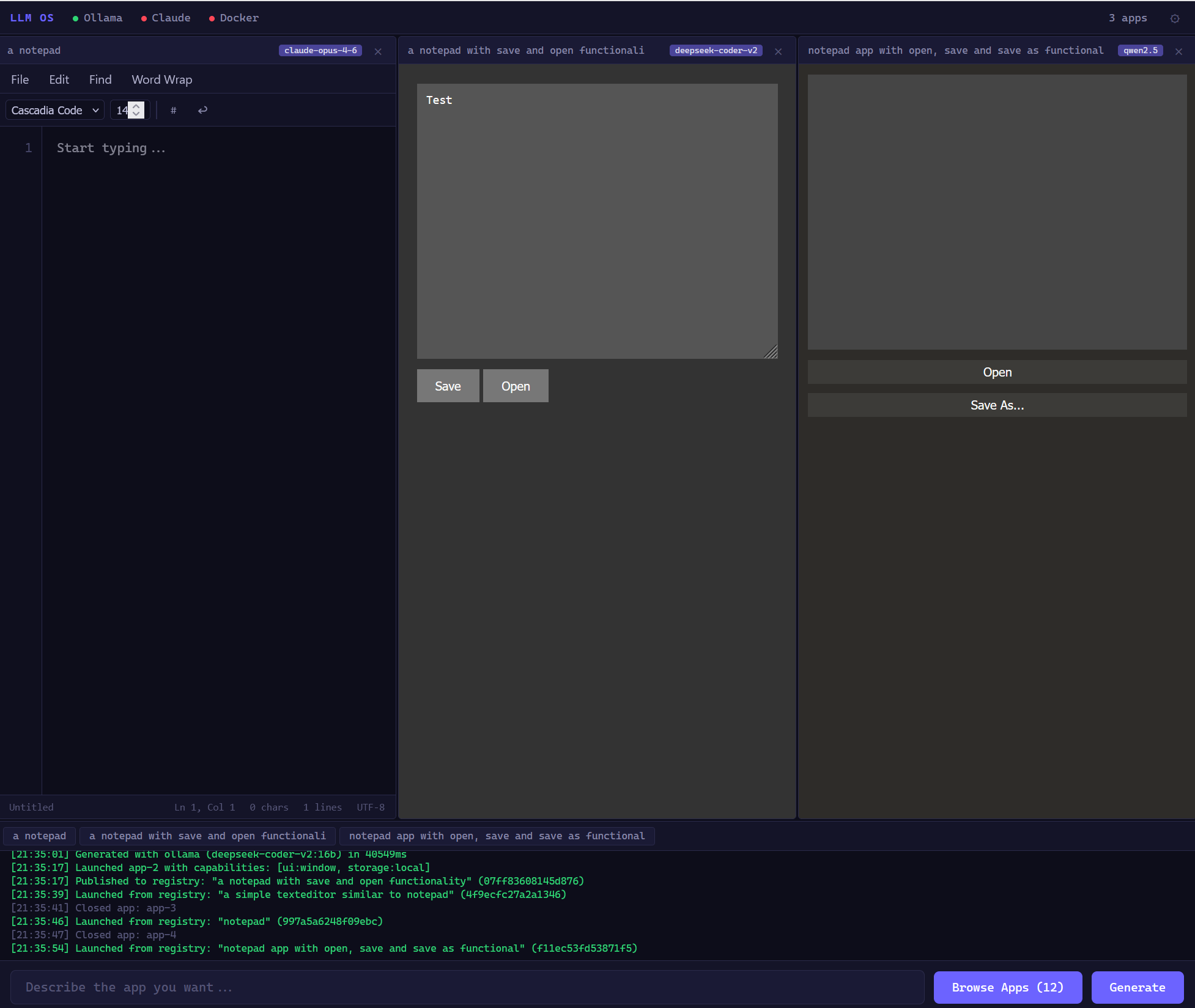

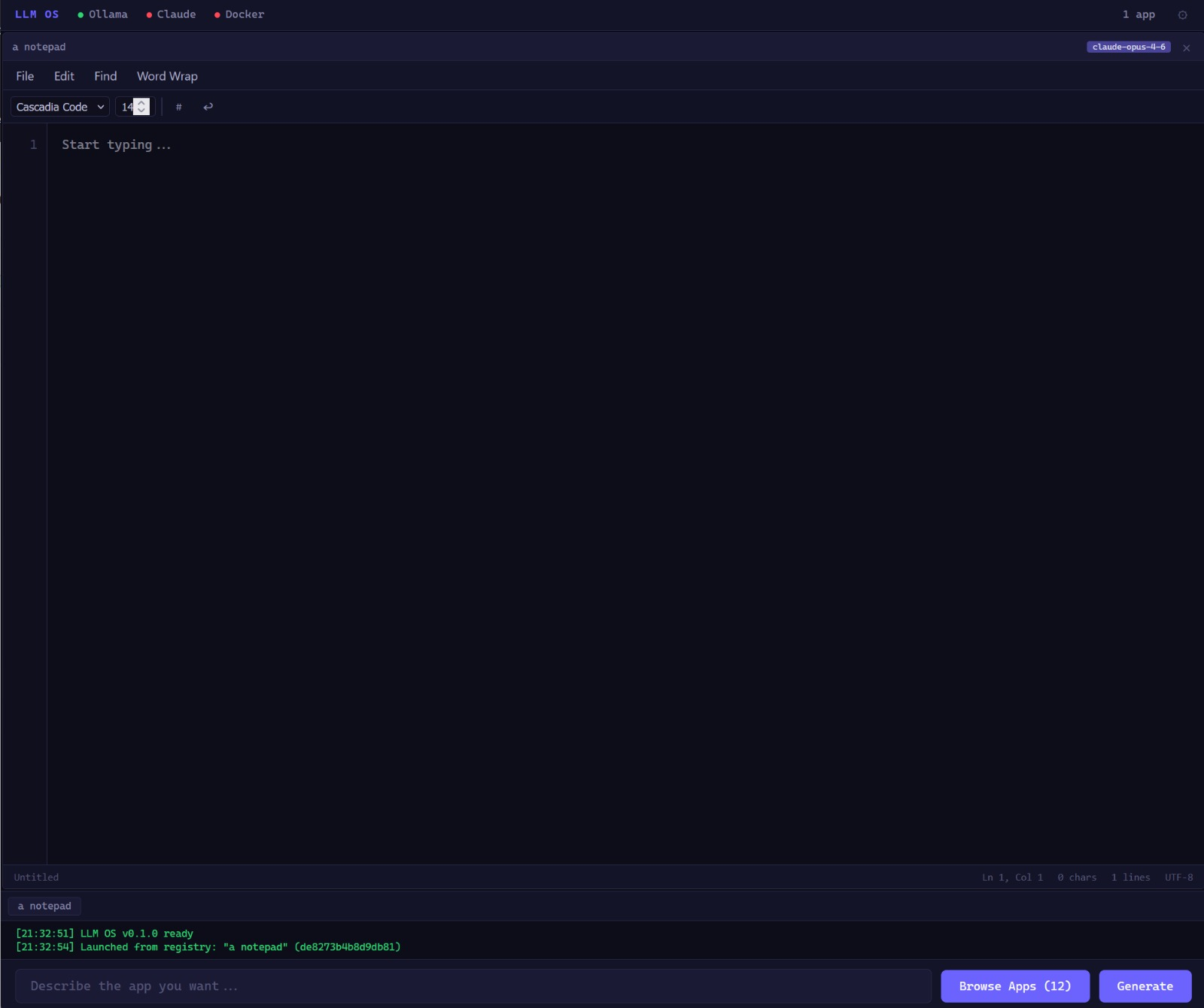

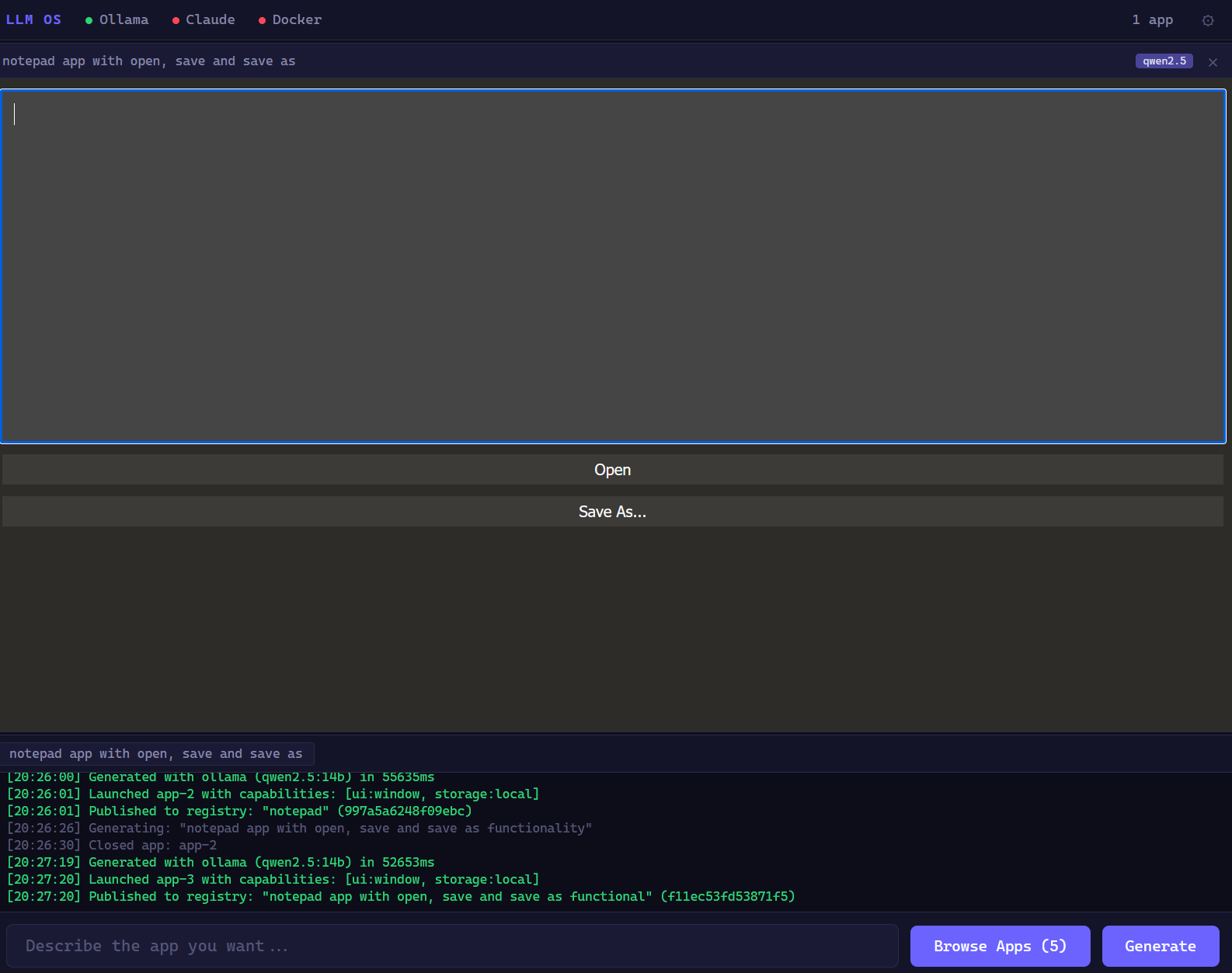

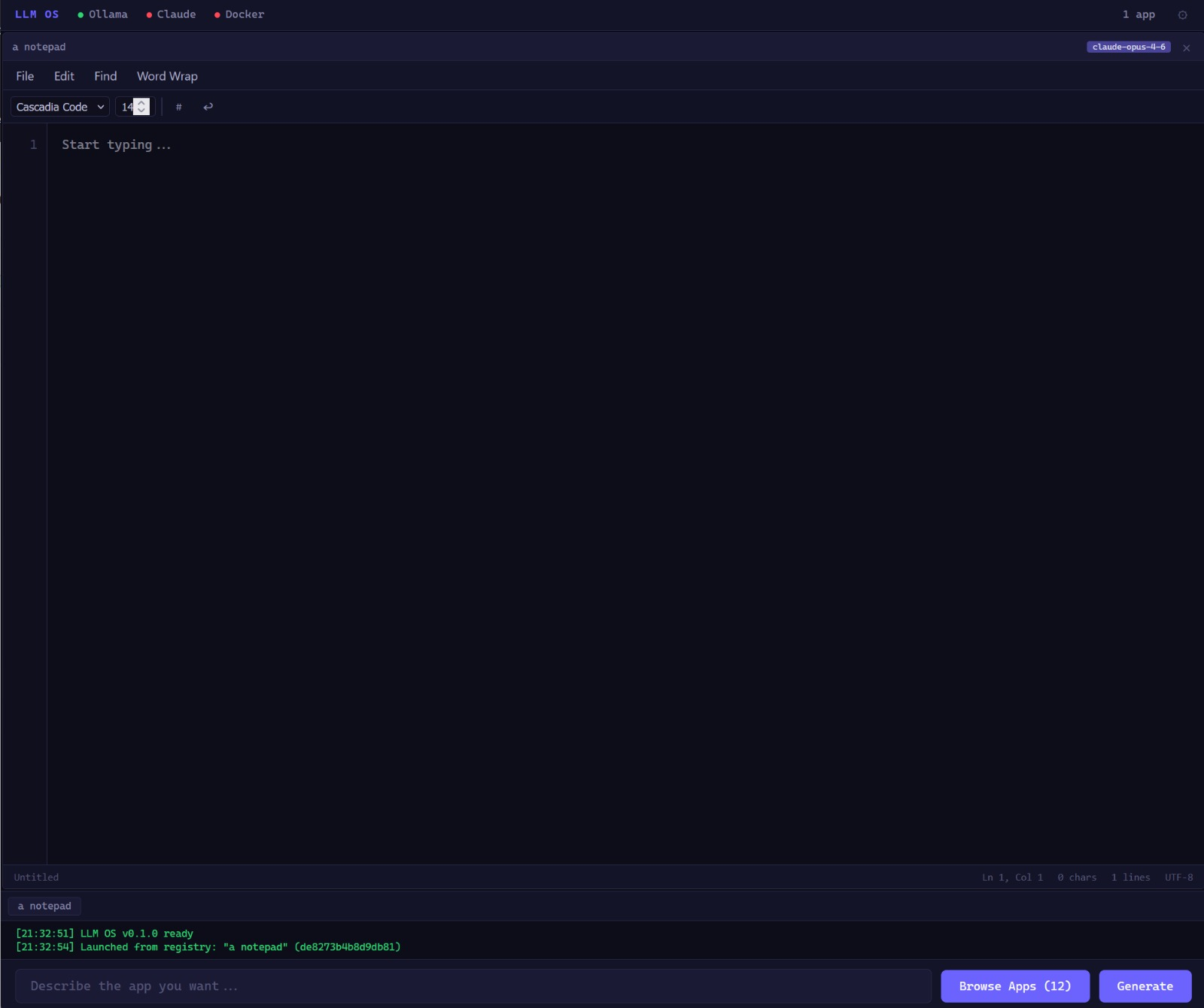

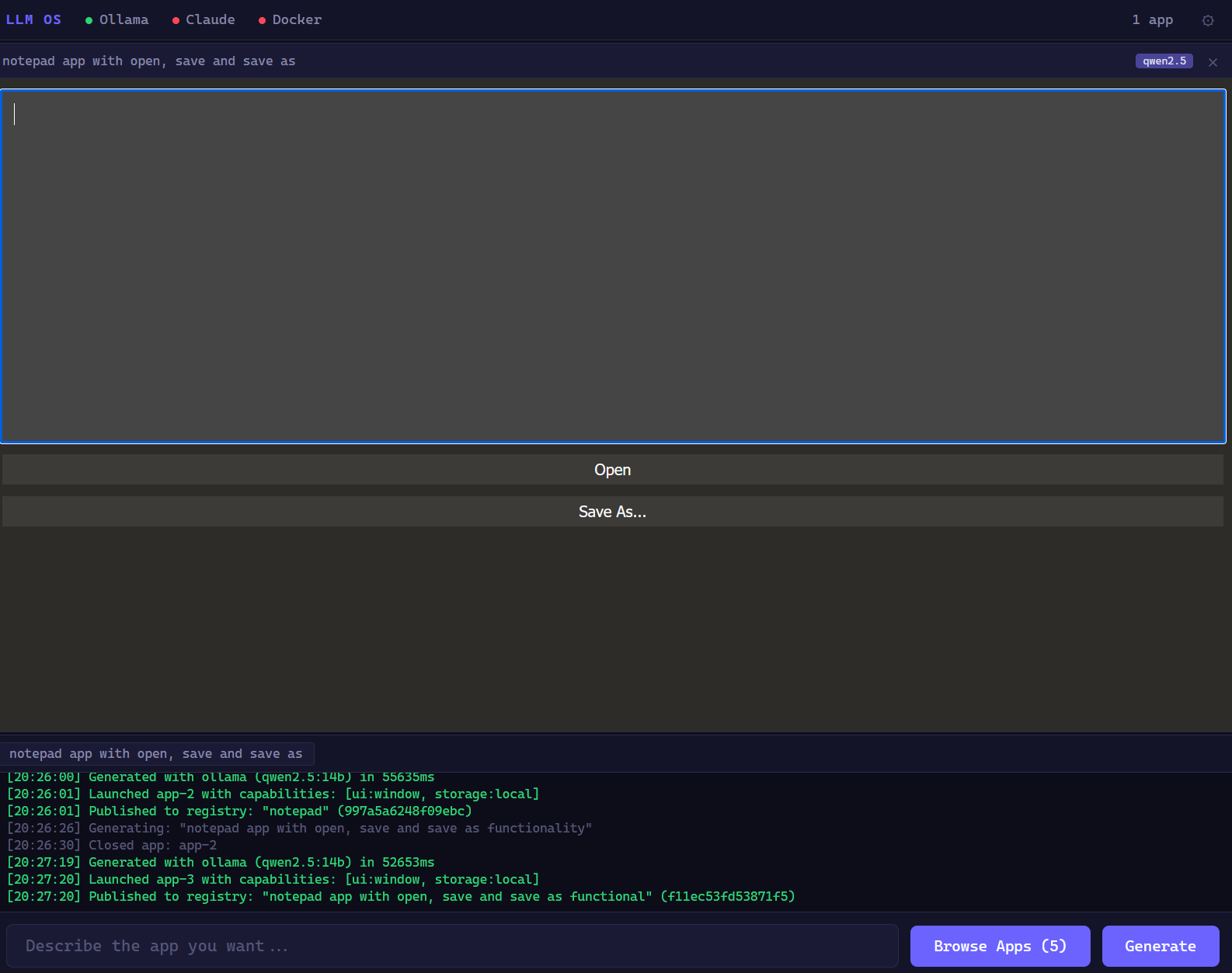

Rudimentary but real. These are actual screenshots from the running prototype — not mockups.

No apps ship pre-installed. Describe what you need in plain language, and the OS generates it, sandboxes it, and runs it — getting smarter as more resources become available. Inspired by Karpathy's LLM OS concept.

These aren't guidelines. They're enforced at every layer — deterministic scans, AI review, and human oversight.

No telemetry. No tracking. No data exfiltration. Generated apps run in sandboxes with strict capability gates. When in doubt, deny access. User privacy is non-negotiable.

No artificial limits. No paywalls. Users can generate and run any software they want — as long as it doesn't harm others. The OS serves the user, not the other way around.

Use it freely. Adapt it as you see fit. But if you benefit from it — contribute back, even a little. Code that's contributed must not damage the core idea.

These rules aren't perfect. Neither is this code. We can always improve — as long as the core intent isn't violated. Ship working code, iterate, improve.

The OS scales its intelligence to the resources available. More models = smarter routing, better apps.

Keyword matching classifies prompts. Known app templates (SSH, browser) still work. The OS is functional without any LLM.
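
As a rough illustration of the no-LLM tier, keyword matching against known templates could look like the sketch below. The keyword table and the `matchTemplate` name are assumptions made for this example; only the SSH and browser templates are mentioned in the text above.

```javascript
// Hypothetical keyword table: the trigger words are illustrative, not the project's real list.
const TEMPLATE_KEYWORDS = {
  ssh: ["ssh", "terminal", "remote shell"],
  browser: ["browser", "web page", "website"],
};

// Return the first template whose keyword appears in the prompt, or null if none match.
function matchTemplate(prompt) {
  const text = prompt.toLowerCase();
  for (const [template, keywords] of Object.entries(TEMPLATE_KEYWORDS)) {
    if (keywords.some((kw) => text.includes(kw))) return template;
  }
  return null;
}
```

A prompt such as "Open an SSH session to my server" would resolve to the `ssh` template, while an unmatched prompt returns `null` and would need a model to generate the app.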

A small local model classifies prompts: type, complexity, template, title. Runs on a single CPU core. Regex fallback if it fails.

Generate complete applications locally. No cloud API needed. Resource monitor auto-selects the strongest available model.

Claude Opus, GPT-4o, or any OpenAI-compatible API. Request by name: "build a chat app using opus". Model tier system auto-escalates for complex tasks.

From natural language to a running app in seconds. Every step is security-gated.

"Make me a todo list with categories" — plain language, no code required.

A small LLM classifies your prompt — type, complexity, template match, model hint. If no LLM is available, regex takes over. Say "using opus" or "with haiku" to pick a specific model.
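
The model hint can be picked out with a plain regular expression, which also works when no classifier model is available. This is a minimal sketch: the `MODEL_HINT` pattern and `parsePrompt` function are hypothetical, and the recognized names are limited to the two mentioned above.

```javascript
// Hypothetical hint pattern: "using <model>" or "with <model>" for a short list of known names.
const MODEL_HINT = /\b(?:using|with)\s+(opus|haiku)\b/i;

// Split a prompt into the requested model (if any) and the remaining task description.
function parsePrompt(prompt) {
  const match = prompt.match(MODEL_HINT);
  return {
    modelHint: match ? match[1].toLowerCase() : null,
    // Strip the hint so the generator only sees the task itself.
    task: prompt.replace(MODEL_HINT, "").replace(/\s+/g, " ").trim(),
  };
}
```

Because the pattern only accepts known model names, a phrase like "with categories" is left in the task text instead of being read as a hint.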

Resource monitor probes available models and picks the strongest one for your task. Supports Ollama, Claude, OpenAI, OpenRouter, Groq, and any compatible API. The system gets smarter as more resources become available.
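
One way to picture the selection step, assuming each probed model carries a numeric strength score: filter to the models that responded, then take the highest score. The `strength` field, the model names, and `pickStrongest` are placeholders for this sketch, not the project's actual data model.

```javascript
// Pick the most capable model among those that answered the probe.
// Returns null when nothing is reachable, so the caller can fall back to keyword matching.
function pickStrongest(models) {
  const usable = models.filter((m) => m.available);
  if (usable.length === 0) return null;
  return usable.reduce((best, m) => (m.strength > best.strength ? m : best));
}

// Hypothetical probe results; names and scores are made up for illustration.
const probed = [
  { name: "local-small", provider: "ollama", strength: 1, available: true },
  { name: "local-large", provider: "ollama", strength: 2, available: true },
  { name: "cloud-frontier", provider: "openai-compatible", strength: 3, available: false },
];
```

With these probe results the cloud model is skipped because it is unreachable, and the larger local model is chosen.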

Deterministic regex/AST scan blocks eval(), dynamic imports, parent frame access, and encoded payloads. No LLM in the loop — no recursive injection.
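
The regex half of such a scan might look like the following sketch. The rule list is illustrative and deliberately small; the patterns and rule ids are assumptions, and a real scanner would pair them with an AST walk to catch obfuscated forms.

```javascript
// Illustrative rules covering the four categories named above.
// None of the patterns use the global flag, so .test() stays stateless.
const RULES = [
  { id: "eval", pattern: /\beval\s*\(/ },
  { id: "dynamic-import", pattern: /\bimport\s*\(/ },
  { id: "parent-frame", pattern: /\b(?:window|self)\.(?:parent|top)\b/ },
  // atob() calls or very long base64-looking literals are treated as encoded payloads.
  { id: "encoded-payload", pattern: /\batob\s*\(|[A-Za-z0-9+/]{200,}={0,2}/ },
];

// Return the ids of every rule the generated source violates; an empty list means it passed.
function scan(source) {
  return RULES.filter((rule) => rule.pattern.test(source)).map((rule) => rule.id);
}
```

Since the check is a pure function of the source text, the same input always produces the same verdict, and nothing in the generated code can talk its way past it.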

The app declares what it needs (storage, timers, network). You review and approve each one. The app gets nothing you don't explicitly allow.
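
The approval step reduces to an intersection: an app receives only the capabilities it requested, the OS recognizes, and the user approved. The sketch below assumes capabilities are plain strings; the names come from the examples above, and `grantCapabilities` is a hypothetical helper.

```javascript
// Capability names taken from the examples in the text; the full set is an assumption.
const KNOWN_CAPABILITIES = new Set(["storage", "timers", "network"]);

// Deny by default: unknown or unapproved capabilities are silently dropped.
function grantCapabilities(requested, approvedByUser) {
  const approved = new Set(approvedByUser);
  return requested.filter((cap) => KNOWN_CAPABILITIES.has(cap) && approved.has(cap));
}
```

An app that asks for storage and network, where the user approves only storage, ends up with storage alone.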

Three isolation tiers: iframe with strict CSP, WASM sandbox with memory limits, or Docker containers for process apps. HMAC-SHA256 signed capability tokens. Every SDK call is validated against your approved capabilities.
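
To make the token idea concrete, here is a minimal sketch of signing and checking a capability token with Node's built-in `crypto` module. The token layout (base64url JSON body, a dot, then a hex signature) and all function names are assumptions for illustration; the project's real format may differ.

```javascript
const crypto = require("node:crypto");

// Hypothetical layout: base64url(JSON payload) + "." + hex HMAC-SHA256 of that body.
function signToken(payload, secret) {
  const body = Buffer.from(JSON.stringify(payload)).toString("base64url");
  const sig = crypto.createHmac("sha256", secret).update(body).digest("hex");
  return `${body}.${sig}`;
}

// Return the payload if the signature is valid, otherwise null.
function verifyToken(token, secret) {
  const [body, sig] = token.split(".");
  if (!body || !sig) return null;
  const expected = crypto.createHmac("sha256", secret).update(body).digest("hex");
  const a = Buffer.from(sig, "utf8");
  const b = Buffer.from(expected, "utf8");
  // timingSafeEqual throws on length mismatch, so compare lengths first.
  if (a.length !== b.length || !crypto.timingSafeEqual(a, b)) return null;
  return JSON.parse(Buffer.from(body, "base64url").toString("utf8"));
}

// Validate a single SDK call against the capabilities inside a verified token.
function isCallAllowed(token, secret, capability) {
  const payload = verifyToken(token, secret);
  return payload !== null && payload.capabilities.includes(capability);
}
```

The signature is compared in constant time, and a token whose body was swapped or that was signed with a different secret fails verification before any capability is consulted.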

Layered design — every layer gets replaced as we move toward an ephemeral OS.

What LLMs can generate today, and what becomes possible as models improve. Honest timelines, not hype.

LLM OS isn't trying to replace your desktop. Here's where it shines.

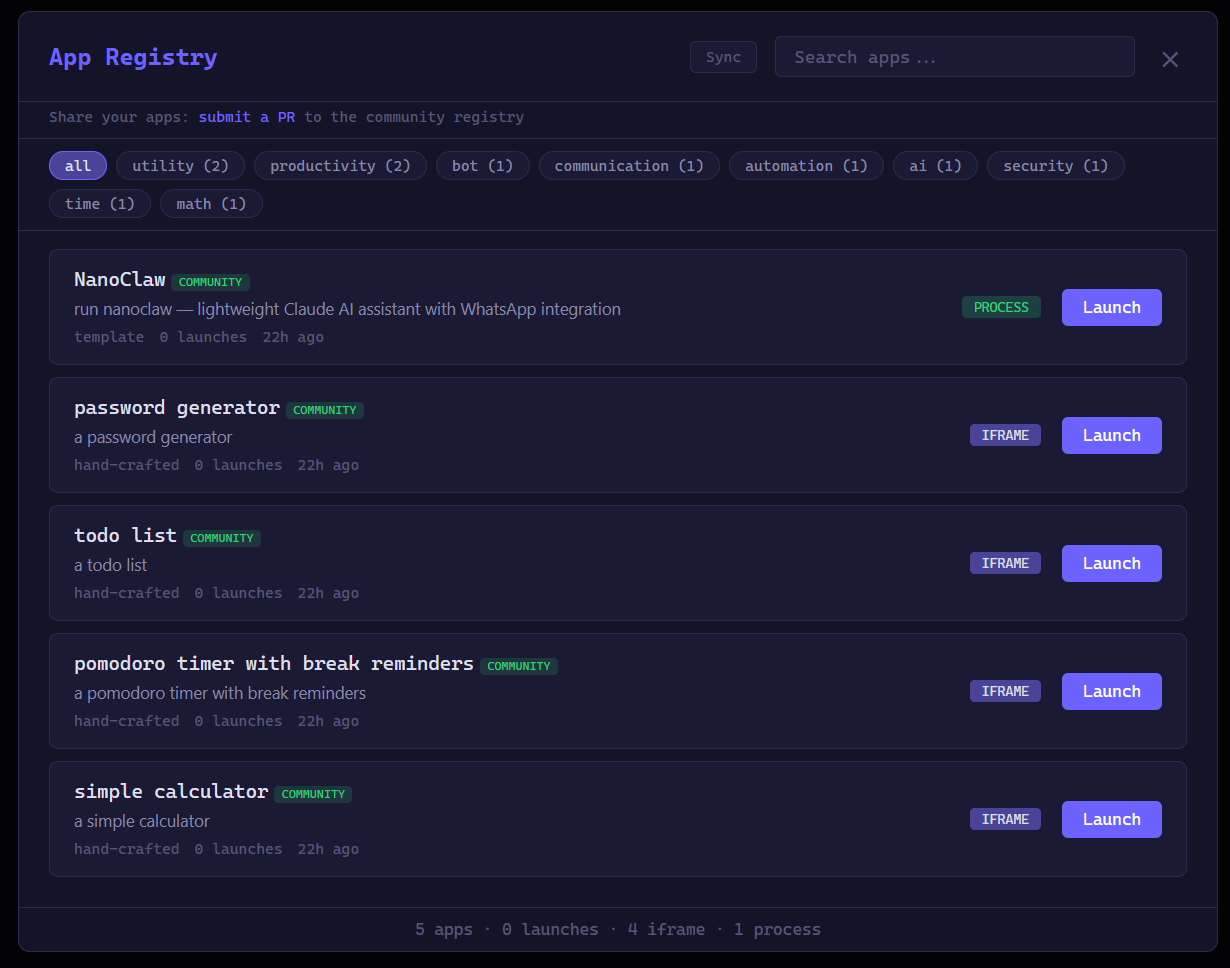

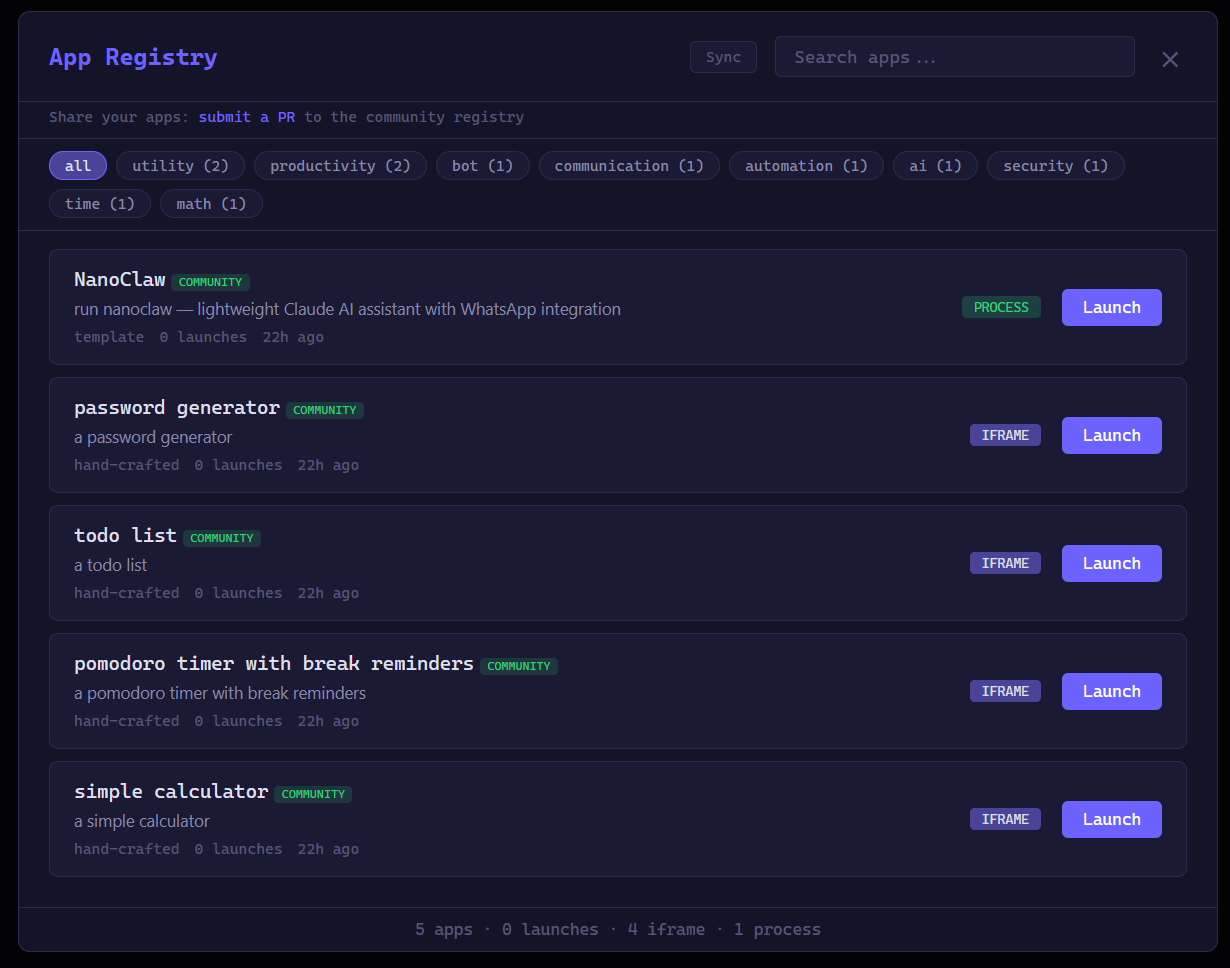

Need a CSV converter? A color picker? A timer with specific intervals? Describe it and it exists. No searching app stores, no installing, no accounts. Use it once and discard it — or save it to the registry.

Test an idea in seconds. "Make a dashboard that shows CPU and memory usage" — you have a working prototype before you'd finish setting up a project. Iterate with follow-up prompts.

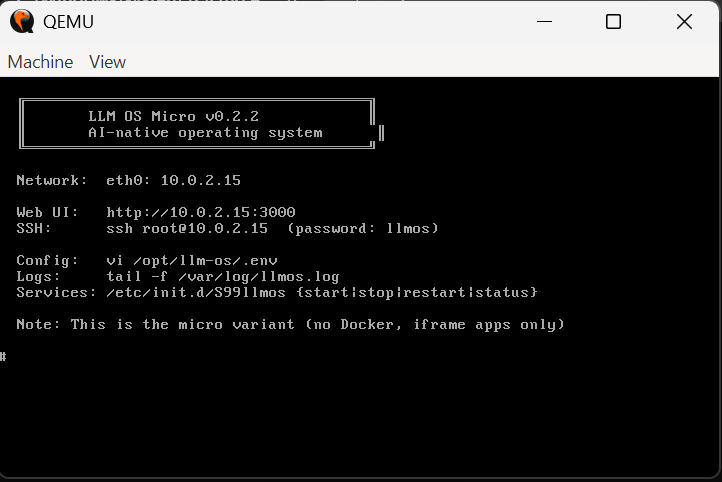

Boot a 50MB VM image on any hypervisor. Point it at a local Ollama instance. Generate and run apps without internet. Useful for air-gapped environments, IoT, or just privacy.

See how different models generate the same app. Understand what LLMs are good at and where they fail. A sandbox for exploring AI capabilities with real, runnable output.

An OS that adapts to you. Your profile defines what apps to generate at boot, your preferences, your workflows. Every instance is unique. The prompt is the source code — fork anyone's setup.

A real testbed for capability-based security, sandboxing, prompt injection defense, and LLM code generation safety. Every layer is open for inspection, modification, and attack.

Three paths. Pick the one that fits.

Open the repo in VS Code. Claude Code reads CLAUDE.md automatically and knows the entire project.

```shell
gh repo fork DayZAnder/llm-os --clone
cd llm-os
code .
# Tell Claude Code what to build:
#   "Add resource quotas for Docker apps"
#   "Add LLM-driven system config"
#   "Audit the security"
```

Copy a ready-made prompt from CONTRIBUTING.md into your preferred AI assistant.

```shell
gh repo fork DayZAnder/llm-os --clone
cd llm-os
# Open CONTRIBUTING.md
# Copy a component prompt
# Paste into your AI tool
# Each prompt includes values context
```

Fork, read the README, run the prototype, and pick an issue.

```shell
gh repo fork DayZAnder/llm-os --clone
cd llm-os
cp .env.example .env
node src/server.js
# Open http://localhost:3000
# npm test (355 tests, zero deps)
# Supports Ollama, Claude, OpenAI,
# OpenRouter, Groq, Together, vLLM
```

Three layers, no single point of trust. Every contribution is checked.

Regex-based static analysis runs locally and in CI. Detects telemetry, sandbox weakening, privacy violations, tracking code. Blocks merge on critical findings.
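
A merge gate of this kind could be sketched as a list of rules with severities, where any critical finding blocks the merge. The rule ids, patterns, and the `reviewDiff` function below are hypothetical examples, not the project's actual rule set.

```javascript
// Illustrative rules only; the patterns and severity levels are assumptions.
const VALUE_RULES = [
  { id: "telemetry", severity: "critical", pattern: /\b(?:sendBeacon|gtag)\b/ },
  { id: "sandbox-weakening", severity: "critical", pattern: /allow-same-origin/ },
  { id: "remote-fetch", severity: "warning", pattern: /\bfetch\s*\(\s*["']https?:/ },
];

// Check the lines a PR adds and decide whether the merge must be blocked.
function reviewDiff(addedLines) {
  const findings = [];
  addedLines.forEach((line, index) => {
    for (const rule of VALUE_RULES) {
      if (rule.pattern.test(line)) {
        findings.push({ rule: rule.id, severity: rule.severity, line: index + 1 });
      }
    }
  });
  return { findings, blockMerge: findings.some((f) => f.severity === "critical") };
}
```

Warnings are reported without blocking, which leaves room for the AI review and the maintainer to judge the ambiguous cases.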

Claude reviews every PR diff against the core values. Posts findings as comments. Catches subtle violations that regex can't see.

PR template requires values self-certification. Maintainer has final authority on edge cases. No automated system is trusted alone.

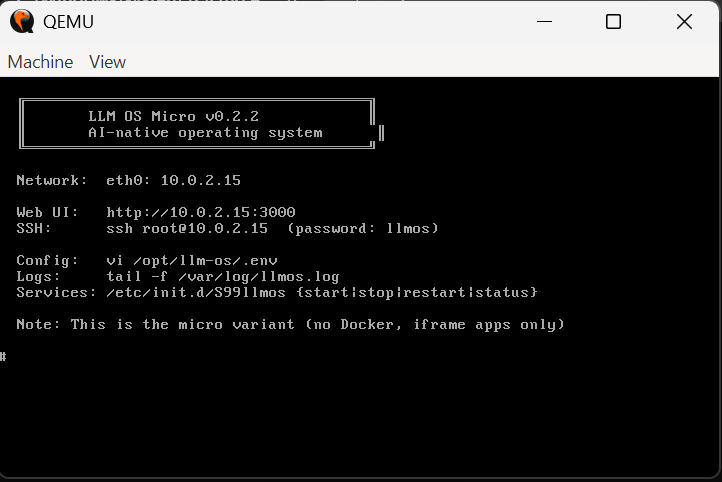

Boot a full LLM OS instance in your hypervisor. Three variants, three formats — pick what fits.

Full features, headless. Docker support for process apps. SSH access.

QCOW2

Boots directly into the full-screen LLM OS UI. Everything from Server plus a kiosk browser.

QCOW2

Log in with root / llmos, then open http://<vm-ip>:3000 in your browser.

Configure your LLM provider: llmos-config set OLLAMA_URL http://your-ollama:11434 or set ANTHROPIC_API_KEY, OPENAI_API_KEY, etc.

Supports Ollama, Claude, OpenAI, OpenRouter, Together, Groq, vLLM, and LM Studio.

Change the default password on first login!

The next operating system won't ship apps — it'll generate them.

If that future interests you, start building.
